Senior Research Project

In my senior year of high school, I pursued a machine vision research project in the Computer Systems Lab. The application took a top view of a chessboard from a webcam and, as it watched the game, recorded the moves being made in standard chess notation. At the end of the year, I presented my results at a symposium and wrote a paper on my findings. Over the course of the project I learned a lot about the challenges associated with machine vision. In its final form, the application was a working demo from start to finish, although it lacked the commercial robustness I had originally aimed for.

PowerPoint Presentation

Final Paper

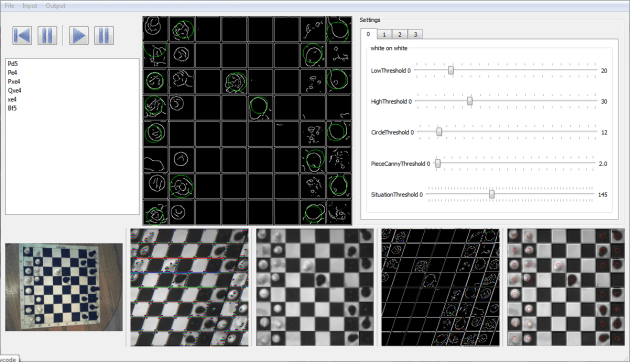

One of the later user interfaces.

Chess Heuristic Event Scribe System

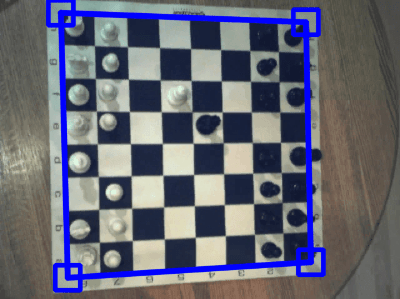

The input from the webcam is a skewed image of the chessboard. Although I had initially looked into automatically detecting the chessboard in the image, I ended up having the user define the bounds of the board for simplicity and robustness.

We get a raw, skewed image from the webcam.

The user defines the bounds of the board.

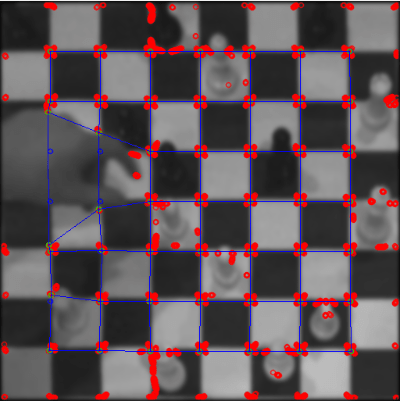

The program transformed the pixels inside the bounds into a square image, which gave an approximately top-down view of the chessboard. I then ran the image through a Harris corner detector to find the corners of the squares on the board. This allowed the algorithm to detect whether an object (e.g., a hand) was blocking the board. If the board was obscured, the program dropped the frame.
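The mapping from a user-defined quadrilateral to a square can be expressed as a homography. The following is a minimal pure-Python sketch of that idea, not the project's original code: the names `solve_linear`, `homography`, `apply_h`, and `warp_to_square` are hypothetical, the image is assumed to be a list of pixel rows, and sampling is nearest-neighbor for brevity.

```python
def solve_linear(a, b):
    """Solve a x = b by Gauss-Jordan elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(n):
            if r != col:
                f = m[r][col] / m[col][col]
                m[r] = [x - f * y for x, y in zip(m[r], m[col])]
    return [m[i][n] / m[i][i] for i in range(n)]


def homography(src, dst):
    """Return the 3x3 matrix mapping four src points to four dst points."""
    a, b = [], []
    for (x, y), (u, v) in zip(src, dst):
        # Two linear equations per correspondence, with H[2][2] fixed to 1.
        a.append([x, y, 1, 0, 0, 0, -u * x, -u * y])
        b.append(u)
        a.append([0, 0, 0, x, y, 1, -v * x, -v * y])
        b.append(v)
    h = solve_linear(a, b) + [1.0]
    return [h[0:3], h[3:6], h[6:9]]


def apply_h(h, x, y):
    """Apply homography h to the point (x, y)."""
    w = h[2][0] * x + h[2][1] * y + h[2][2]
    return ((h[0][0] * x + h[0][1] * y + h[0][2]) / w,
            (h[1][0] * x + h[1][1] * y + h[1][2]) / w)


def warp_to_square(image, quad, size):
    """Resample the quadrilateral `quad` of `image` into a size x size grid.

    `quad` lists the corners clockwise from top-left as (x, y) pixels.
    """
    s = size - 1
    square = [(0, 0), (s, 0), (s, s), (0, s)]
    h = homography(square, quad)  # map each output pixel back to the source
    out = []
    for v in range(size):
        row = []
        for u in range(size):
            x, y = apply_h(h, u, v)
            xi = min(max(int(round(x)), 0), len(image[0]) - 1)
            yi = min(max(int(round(y)), 0), len(image) - 1)
            row.append(image[yi][xi])
        out.append(row)
    return out
```

Mapping output pixels back into the source image (rather than pushing source pixels forward) guarantees that every pixel of the square image receives a value.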

We run a corner detection algorithm on the transformed image.

This helps detect when the board is obscured.
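How the detected corners were turned into an "obscured" decision is not spelled out here, so the following is only one plausible sketch. It assumes the corner detector returns a list of (x, y) points in the square board image, and it compares them against the 7 x 7 lattice of interior corners where four squares meet. The function names, the pixel tolerance, and the 0.9 cutoff are all hypothetical.

```python
def expected_corners(size, n=8):
    """Interior grid corners of an n x n board drawn in a size x size image."""
    step = size / n
    return [(c * step, r * step) for r in range(1, n) for c in range(1, n)]


def visible_fraction(detected, size, tol=4.0):
    """Fraction of expected corners with a detected corner within tol pixels."""
    expected = expected_corners(size)
    hits = 0
    for ex, ey in expected:
        if any((ex - dx) ** 2 + (ey - dy) ** 2 <= tol ** 2
               for dx, dy in detected):
            hits += 1
    return hits / len(expected)


def is_obscured(detected, size, min_fraction=0.9):
    """Treat the frame as obscured when too many grid corners are missing."""
    return visible_fraction(detected, size) < min_fraction
```

A frame in which a hand covers one quadrant of the board would lose the corners in that region, fall below the cutoff, and be dropped.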

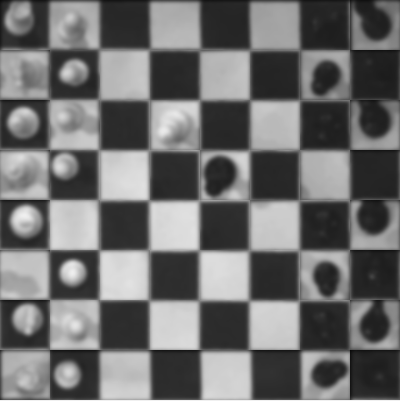

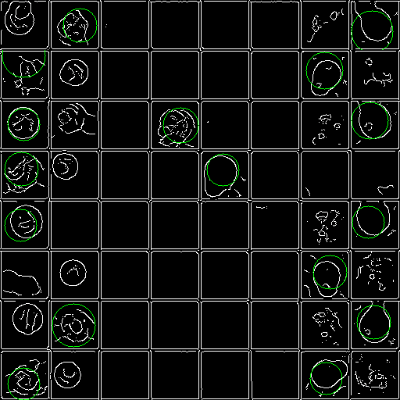

For unobscured frames, the program then broke the board image down into individual squares to analyze. The image was divided along the blue lines visualized above, with each quadrilateral area of pixels transformed into a square image, just as the whole board image had been earlier.
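As a simplified sketch of this step, the code below cuts an already-squared board image into 64 cells along an evenly spaced grid. That is an assumption made for brevity: the program described above divided the image along detected lines and warped each quadrilateral. The function name `split_into_squares` and the orientation (rank 8 at the top, file a at the left) are also hypothetical.

```python
FILES = "abcdefgh"


def split_into_squares(board):
    """Cut a square board image (a list of pixel rows) into 64 named cells.

    Assumes rank 8 is at the top of the image and file a is at the left.
    """
    size = len(board)
    cells = {}
    for r in range(8):
        for c in range(8):
            top, bottom = r * size // 8, (r + 1) * size // 8
            left, right = c * size // 8, (c + 1) * size // 8
            name = FILES[c] + str(8 - r)
            cells[name] = [row[left:right] for row in board[top:bottom]]
    return cells
```

Keying the cells by square name keeps the later occupancy and move-tracking steps independent of pixel coordinates.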

A collage of all the individual squares as used for analysis.

A visualization of the image after a Canny edge pass.

To find which squares were occupied, I ran each square image through a Canny edge detector. I realized, however, that lighting differed significantly between piece/square combinations: a black piece on a black square is much harder to detect than a black piece on a white square. So I first estimated the color of each square by averaging its pixels. The average closest to pure white or pure black became my baseline, and I extrapolated from there. Each square was then slotted into one of the four possible piece/square color combinations based on its average.
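The averaging and baseline steps can be sketched as follows. This is an illustration under assumptions, not the original method: pixels are taken to be grayscale values from 0 to 255, and the reference levels in `LEVELS` are hypothetical placeholders standing in for whatever values were extrapolated from the baseline.

```python
# Hypothetical reference brightness for each piece/square combination.
LEVELS = {
    "black piece on black square": 30,
    "white piece on black square": 90,
    "black piece on white square": 160,
    "white piece on white square": 225,
}


def mean_intensity(cell):
    """Average grayscale value of a cell given as a list of pixel rows."""
    pixels = [p for row in cell for p in row]
    return sum(pixels) / len(pixels)


def find_baseline(means):
    """Return (name, mean) of the square closest to pure black or white."""
    return min(means.items(), key=lambda kv: min(kv[1], 255 - kv[1]))


def classify(mean, levels=LEVELS):
    """Pick the combination whose reference level is nearest to the mean."""
    return min(levels, key=lambda name: abs(levels[name] - mean))
```

In practice the reference levels would need to be recalibrated for each lighting setup, which matches the sensitivity to lighting noted later in this write-up.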

The Canny edge detector used different settings depending on the piece/square color combination, which gave fairly consistent output across lighting conditions. The program then ran the image through a Hough circle detector (with restrictions on radius and position) and decided whether a piece was on that square by combining the edge-pixel count with the presence of a circle.
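The exact way the two cues were combined is not given, so the sketch below shows one possible rule: a square counts as occupied if it has many edge pixels, or a moderate number of edge pixels plus a detected circle. It assumes the edge detector yields a binary map (a list of rows of 0/1 values) and that the circle detector reduces to a boolean. The per-combination threshold pairs and both cutoffs are hypothetical.

```python
# Hypothetical (low, high) edge-detector thresholds per combination.
CANNY_THRESHOLDS = {
    "black_on_black": (10, 40),
    "white_on_black": (50, 150),
    "black_on_white": (50, 150),
    "white_on_white": (20, 60),
}


def edge_fraction(edges):
    """Fraction of pixels marked as edges in a binary edge map."""
    total = sum(len(row) for row in edges)
    return sum(1 for row in edges for p in row if p) / total


def is_occupied(edges, circle_found, strong=0.15, weak=0.05):
    """Combine edge density with circle presence to decide occupancy."""
    frac = edge_fraction(edges)
    return frac >= strong or (circle_found and frac >= weak)
```

Requiring at least some edge pixels alongside a circle guards against spurious circle detections on empty squares.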

The final output, showing detected square color and piece location.

The final step was to track piece positions throughout the game. In the end, due to time restrictions, I merely had the algorithm track piece movements rather than piece types. That is, the program couldn’t figure out which piece was a knight and which was a pawn. It needed the initial board layout to be provided.
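Tracking movements from a known starting layout can be sketched as a diff between two occupancy snapshots. The code below is a hedged illustration, not the project's implementation: it assumes each snapshot is a dict from square name to detected piece color ("w" or "b"), that piece types come from a user-supplied layout dict, and that moves are written in long algebraic notation. Castling, en passant, and promotion are deliberately left unhandled.

```python
def infer_move(before, after):
    """Return (source, destination, is_capture), or None if unclear."""
    vacated = [s for s in before if s not in after]
    appeared = [s for s in after if s not in before]
    recolored = [s for s in after
                 if s in before and before[s] != after[s]]
    if len(vacated) == 1 and len(appeared) == 1 and not recolored:
        return vacated[0], appeared[0], False
    if len(vacated) == 1 and not appeared and len(recolored) == 1:
        # The destination stayed occupied but changed color: a capture.
        return vacated[0], recolored[0], True
    return None  # special move or detection error


def notate(pieces, move):
    """Long algebraic notation, e.g. 'Ng1-f3'; pawns carry no letter."""
    src, dst, capture = move
    letter = "" if pieces[src] == "P" else pieces[src]
    return letter + src + ("x" if capture else "-") + dst


def apply_move(pieces, move):
    """Return the piece layout after moving the piece from src to dst."""
    src, dst, _ = move
    updated = dict(pieces)
    updated[dst] = updated.pop(src)
    return updated
```

Because a piece's identity simply travels with it from square to square, the program never has to recognize a knight or a pawn visually, which is why the initial layout must be supplied.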

One of the biggest research directions left open at the end was extending the algorithm to handle a wider range of lighting conditions. The program was fairly picky about lighting and color, and throughout the project I struggled to get good contrast in the black areas of the image. Although the algorithm never reached commercial-level robustness, I learned a lot about both research and machine vision during the project. Much of the time in research, I realized, is spent reading and exploring paths that turn out to be dead ends. Without experiencing this first-hand, it is hard to grasp how much effort goes unseen in a final product.