Portfolio

Email: mattlevonian@gmail.com

Download my resume.

Battlefield Mobile (2022)

Learn more here.

Puzzle Bobble VR: Vacation Odyssey (2020)

Battlewake (2019)

Click here to read more.

Glimmer Grove (2019)

Click here to read more.

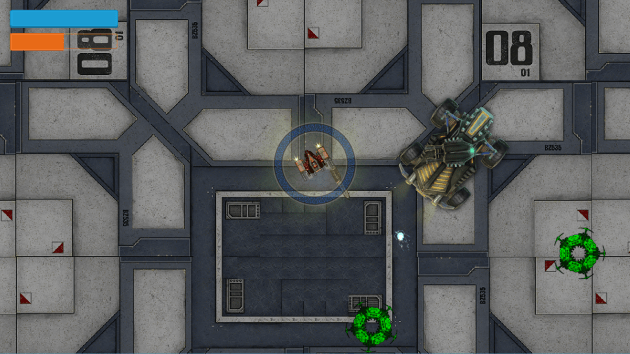

Sky Command (2017-2018)

Click here to read more.

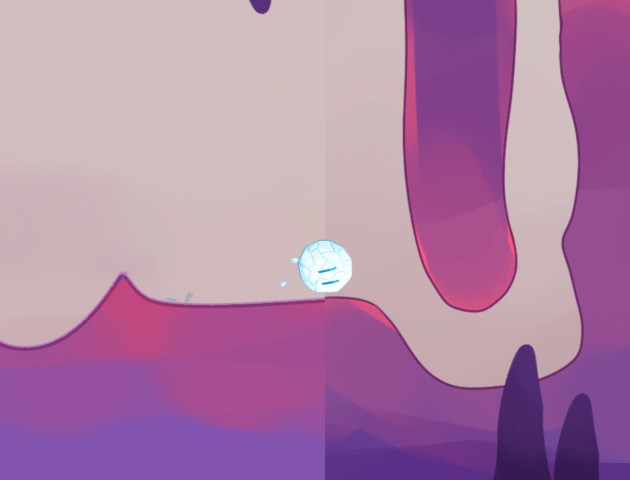

From Light (2015-2017)

(website)

Click here to read more.

Sky Command (2016)

Galactic Steel (2015)

Possession (2015)

The game website is no longer up, but you can see some of it through the Wayback Machine.
